Learning Goals

CMSE 492: AI in the Real World: Data, Power, and Society

The Philosophy

AI is not just a technical shift; it is a fundamental restructuring of labor, power, and knowledge. Most MSU graduates will not be training models, but they will be working alongside them. This course is designed to build critical thinking skills and a healthy skepticism by helping you develop the ability to use, evaluate, question, and push back on AI systems.

Each person in this room will have a different view of, and relationship to, AI: some may see it as an essential creative partner, others as a systemic threat. That diversity of perspective is a strength. The goal of this course is not to make you “AI Ready” but to make you AI Critical, so that you are prepared to lead in a workforce where the most valuable skill is knowing when the machine is wrong.

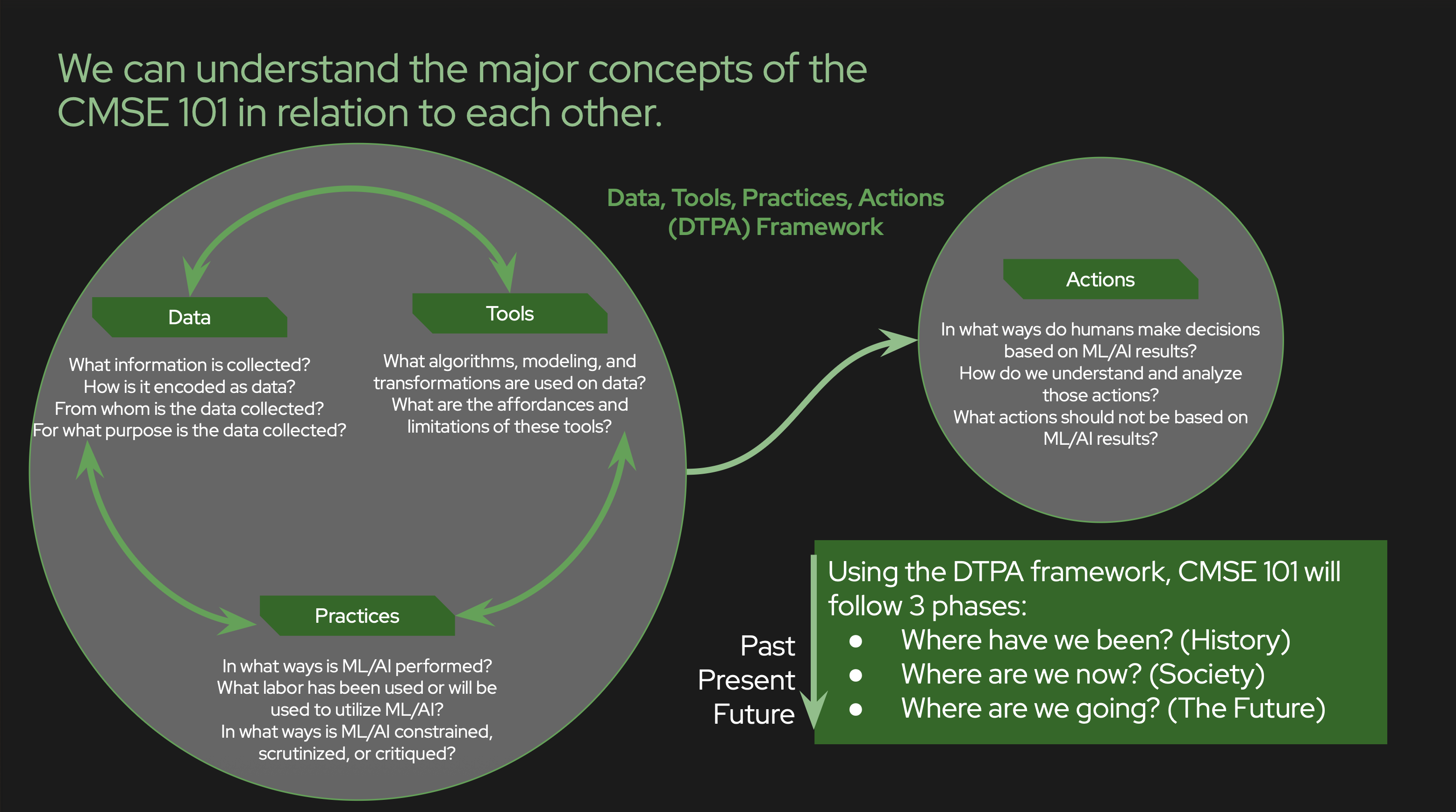

I. The DTPA Framework (Our Core Methodology)

Students will master the Data-Tools-Practices-Actions (DTPA) framework to deconstruct any AI use case. By the end of the course, you will be able to:

- DATA: Deconstruct how information is encoded. You will identify what is collected, who is excluded, and how the technical process of “cleaning” data often erases important information.

- TOOLS: Understand the conceptual math of ML (Classifiers, LLMs, Neural Nets) without needing to code them. You will focus on the “logic of the machine” and the assumptions built into its architecture. You will get a chance to use these tools.

- PRACTICES: Analyze the human-in-the-loop. You will identify who is prompted to make a decision based on the tool’s output and how the interface shapes their choice. You will examine how we use these tools in practice and the trade-offs of different design choices.

- ACTIONS: Evaluate the final outcome. You will determine whether the system achieved its stated goal or merely automated a historical bias under the guise of efficiency. We will examine what actions are incentivized by the tool and who is empowered or marginalized by its deployment.

II. The Four Pillars of AI Literacy

We measure success by your growth in these four depth-oriented literacies. These are the tools that allow you to hold multiple perspectives at once—navigating the utility of AI while remaining aware of its costs.

- Data Literacy (The Audit): You will gain the ability to interrogate the provenance of data. You won’t just see a spreadsheet; you will see the labor of the people who labeled it and the identities of those it “erased” to make the model work. You will understand how “cleaning” data often erases important information, and how choices about what to include or exclude can reflect and reinforce societal biases.

- Quantitative Literacy (The Logic): You will learn to interpret algorithmic reasoning. This means recognizing when a technical claim is “statistically hollow” (using math to mask a lack of evidence) and understanding the limits of what a model can actually predict. You will also examine the energy and labor costs of training models and weigh efficiency claims against these hidden costs.

- Ethical Literacy (The Power Dynamics): We move beyond simple “right vs. wrong.” You will analyze power dynamics: Who is protected by this system? Who is targeted? Who is forced to be its subject? This literacy helps you identify the trade-offs inherent in any high-stakes AI deployment and evaluate the ethical implications of design choices.

- Critical Literacy (The Motive): You will learn to challenge the “inevitability” of AI. You will identify the specific financial and political motives behind a technology’s rollout. This allows you to evaluate the “Hype-Bro” narrative of progress while still finding the practical value the tool might offer your specific field. You will examine the narrative that AI is a “miracle” solution to all problems, as well as the narrative that AI is an existential threat, and learn to navigate these extremes with a critical eye.

III. Chronological Inquiry Goals

1. Where have we been? (The Archeology of AI)

- Historical Evolution: Trace the lineage of AI from its mathematical origins to the combined “scientific revolution” and “surveillance capitalism” models of today.

- Co-Evolution: Analyze how data, tools, practices, and actions have evolved in tandem, creating the current “black box” environment.

- Lens Development: Use the DTPA framework to analyze historical case studies to prepare for modern audits.

2. Where are we now? (The Real-World Audit)

- Sector Audits: Conduct deep-dive investigations into AI’s current role in Healthcare, Law Enforcement, Business, and Art.

- Power Mapping: Use DTPA to map who is empowered and who is marginalized in these domains.

- The Hidden Cost: Identify the environmental and labor costs of AI, specifically the “invisible” human labor of data labeling in the Global South and the massive energy and water consumption of server farms.

3. Where are we going? (Envisioning and Regulation)

- Governance vs. Ethics Washing: Critically compare governance approaches, distinguishing between meaningful regulation and corporate-sponsored “ethics boards.”

- Prototyping Equity: Design a values-driven AI use case rooted in community needs, accounting for the full lifecycle of the DTPA framework.

IV. Communication & Action

- Translate the Technical: You will practice communicating AI risks and benefits to diverse audiences (policymakers, community members, or corporate leaders) who may not have a STEM background.

- The Systemic Lens: You will consistently apply a lens that looks at community-level impact rather than just individual user experience.

Perspective Markers

In this course, no document is “neutral.” Every reading on the syllabus is flagged with Perspective Markers, which help us identify the “motive” of the author:

- [🚀 HYPE]: Claims of “infinite growth” or “miracle” efficiency.

- [🏛️ STATE]: Prioritizes control, monitoring, or institutional “safety.”

- [⬛ BOX]: Technical details are obscured to hide human assumptions.

- [🌳 SYSTEM]: Considers labor, the environment, and social equity.